AWS provides a managed Kubernetes service, Amazon EKS, that reduces cluster maintenance for developers. Recently, I asked myself how the deployment process could be simplified as well. There are many tools, such as Tekton, for building deployment pipelines in a Kubernetes-native way. However, setting up such tools can be unnecessary overhead if you already use a CI pipeline tool like GitLab.

In this article, I will show you how easily you can deploy your applications to your Kubernetes cluster from GitLab CI. We will model a common deployment process:

- Linting & unit testing
- Build and push the Docker image (e.g. to Amazon Elastic Container Registry, ECR)
- Deploy to the Kubernetes cluster (e.g. via a Helm release)

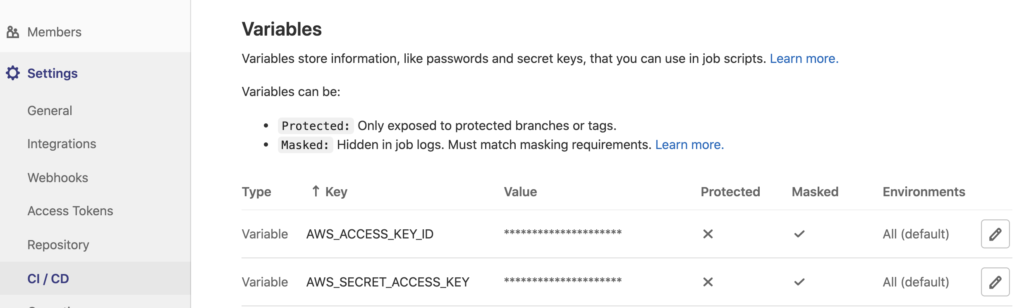

The first CI stage takes care of linting and unit tests, which do not require any AWS components. For the following steps, we need AWS access, which means we need to set up AWS credentials in GitLab (e.g. as CI/CD variables).
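Before any AWS command runs, it can help to fail fast when a credential is missing instead of getting a cryptic CLI error halfway through the job. The following is a minimal sketch, assuming the credentials are stored as GitLab CI/CD variables under the names the AWS CLI reads by default (`AWS_ACCESS_KEY_ID`, `AWS_SECRET_ACCESS_KEY`, `AWS_DEFAULT_REGION`) plus the `AWS_ECR_ACCOUNT` variable used later in this article. `require_vars` is a hypothetical helper, not part of GitLab or the AWS CLI:

```shell
# Hypothetical helper: fail if any of the named environment variables
# is unset or empty. Plain POSIX sh, so it also runs in Alpine-based images.
require_vars() {
  missing=0
  for name in "$@"; do
    # POSIX sh has no ${!name}, so use eval for indirect expansion
    eval "value=\${$name:-}"
    if [ -z "$value" ]; then
      echo "missing required variable: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

In a job script, a call such as `require_vars AWS_ACCESS_KEY_ID AWS_SECRET_ACCESS_KEY AWS_DEFAULT_REGION AWS_ECR_ACCOUNT` at the top would stop the job with a readable message if one of the (assumed) variables was not configured.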

These credentials allow the pipeline to push images to ECR and to connect to the EKS cluster. First, we build the Docker image and push it to our registry:

```shell
aws ecr get-login --no-include-email --registry-ids $AWS_ECR_ACCOUNT | sh
docker build --rm=true -t $IMAGE_NAME . && docker push $IMAGE_NAME
```
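Note that `aws ecr get-login` was removed in AWS CLI version 2 in favour of `aws ecr get-login-password`, whose output is piped into `docker login`. If your build image ships CLI v2, the login step could look like the sketch below. The helper names `ecr_registry` and `ecr_login` and the explicit region argument are illustrative assumptions:

```shell
# Hypothetical helper: build the ECR registry host for an account and region,
# e.g. 123456789012.dkr.ecr.eu-central-1.amazonaws.com
ecr_registry() {
  printf '%s.dkr.ecr.%s.amazonaws.com\n' "$1" "$2"
}

# Hypothetical helper: log Docker in to ECR with AWS CLI v2.
# $1 = AWS account id, $2 = region
ecr_login() {
  aws ecr get-login-password --region "$2" \
    | docker login --username AWS --password-stdin "$(ecr_registry "$1" "$2")"
}
```

With this, `ecr_login "$AWS_ECR_ACCOUNT" eu-central-1` would replace the `get-login` line, and the `docker build` / `docker push` line stays unchanged.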

Next, we need to tell the Kubernetes cluster to use the new Docker image, together with any additional settings for our release. To do so, we first need to configure access to the EKS cluster inside our CI pipeline:

```shell
aws eks --region eu-central-1 update-kubeconfig --name mycluster
```
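The region and cluster name are hard-coded in the command above. If the same job should target several clusters (e.g. staging and production), they could come from CI/CD variables instead. A sketch, where `EKS_REGION`, `EKS_CLUSTER` and `eks_connect` are hypothetical names whose defaults mirror this article's values:

```shell
# Hypothetical CI variables; the defaults are the values used in this article
EKS_REGION="${EKS_REGION:-eu-central-1}"
EKS_CLUSTER="${EKS_CLUSTER:-mycluster}"

# Add a kubeconfig context for the configured EKS cluster
eks_connect() {
  aws eks --region "$EKS_REGION" update-kubeconfig --name "$EKS_CLUSTER"
}
```

Keep in mind that the kubeconfig entry written by `update-kubeconfig` authenticates by calling `aws eks get-token`, so the AWS CLI must also be available in the image that later runs Helm.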

The newly added Kubernetes context now lets us deploy our application (e.g. via Helm):

```shell
helm dependency update ./helm
helm upgrade --install myapp ./helm --wait
```
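The `helm upgrade` command above relies on the chart already knowing which image to run. One way to hand the freshly pushed `$IMAGE_NAME` to the chart is to split it into repository and tag and pass both as values. This sketch assumes a chart that reads `image.repository` and `image.tag`, the layout generated by `helm create`; adjust the keys to your chart. `deploy_release` is a hypothetical helper:

```shell
# Hypothetical helper: deploy ./helm as release "myapp" with the given image.
# Assumes the reference includes a tag and the chart reads
# .Values.image.repository and .Values.image.tag.
deploy_release() {
  image="$1"
  # ECR hosts carry no port, so the last ':' separates repository and tag
  repository="${image%:*}"
  tag="${image##*:}"
  helm dependency update ./helm
  # --set-string keeps purely numeric tags (e.g. build numbers) as strings
  helm upgrade --install myapp ./helm --wait \
    --set-string image.repository="$repository" \
    --set-string image.tag="$tag"
}
```

In the deploy job, `deploy_release "$IMAGE_NAME"` would then replace the two Helm lines, so every pipeline run rolls out exactly the image it just pushed.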

That’s all we need to do. Pretty easy, right? 🙂

Security hint: this approach gives the CI pipeline broad permissions, since its credentials can both push images to ECR and deploy into the cluster. If you need a more secure approach, I suggest looking into GitOps ;-)

Here is the complete pipeline definition (`.gitlab-ci.yml`):

```yaml
stages:
  - test
  - build
  - deploy

variables:
  IMAGE_NAME: $AWS_ECR_ACCOUNT.dkr.ecr.eu-central-1.amazonaws.com/myapp:${CI_COMMIT_REF_SLUG}

lint:
  stage: test
  image: node:12-alpine
  script:
    - npm ci
    - npm run lint

unit-test:
  stage: test
  image: node:12-alpine
  script:
    - npm ci
    - npm test

build:
  stage: build
  image: docker:stable
  services:
    - docker:18-dind
  variables:
    DOCKER_DRIVER: overlay2
  script: |
    # install AWS CLI
    apk add --no-cache python3 py3-pip \
      && pip3 install --upgrade pip \
      && pip3 install awscli \
      && rm -rf /var/cache/apk/*
    # build and store image
    aws ecr get-login --no-include-email --registry-ids $AWS_ECR_ACCOUNT | sh
    docker build --rm=true -t $IMAGE_NAME . && docker push $IMAGE_NAME

deploy:
  stage: deploy
  image:
    name: alpine/helm
    # the image's entrypoint is helm; reset it so GitLab can run the script
    entrypoint: [""]
  script: |
    # install AWS CLI
    apk add --no-cache python3 py3-pip \
      && pip3 install --upgrade pip \
      && pip3 install awscli \
      && rm -rf /var/cache/apk/*
    # add EKS cluster
    aws eks --region eu-central-1 update-kubeconfig --name mycluster
    # deploy new release
    helm dependency update ./helm
    helm upgrade --install myapp ./helm --wait
```